The Silent Killer of Agentic Platforms: The Traditional SaaS Mindset

Something fundamental is shifting in how software gets built.

There is no shortage of conversation about how software gets written: vibe coding, AI-assisted development, agentic coding workflows, and other ways of augmenting a developer’s work. But underneath the productivity debates and tooling wars, there’s a quieter change that matters more for anyone building agentic platforms: the engineering discipline itself is changing.

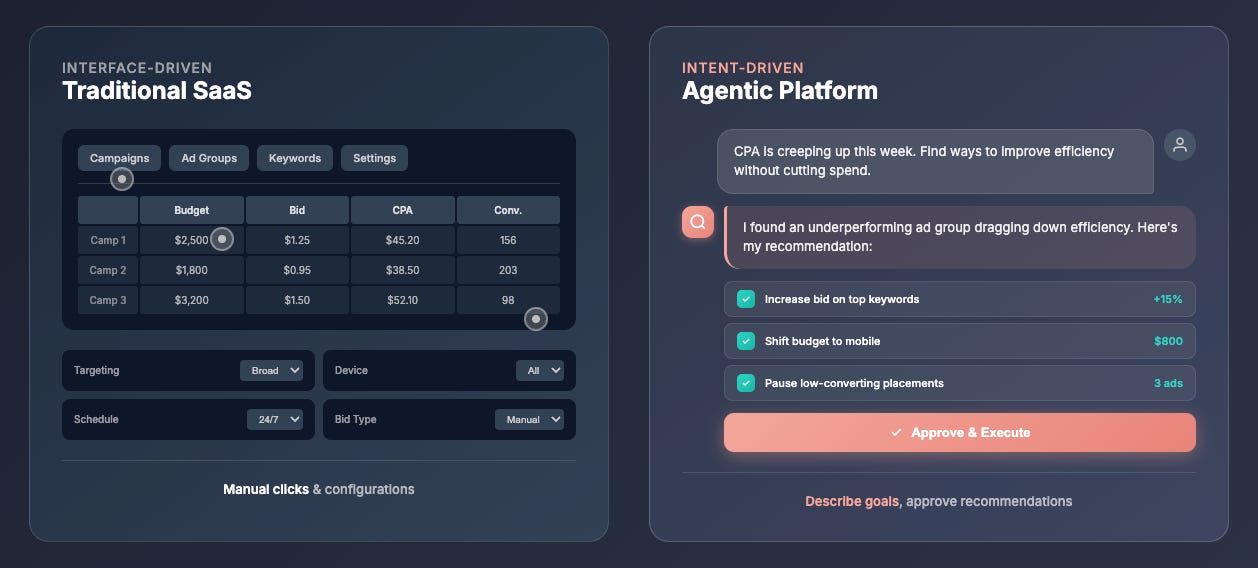

Quick distinction: Traditional SaaS is interface-driven. You click, navigate, configure. The product does what you tell it, click by click, input by input, step by step. Agentic platforms are intent-driven. You describe what you want; the agents figure out how, then surface recommendations for approval. Think traditional ad platforms vs. agentic media operations. In a traditional platform, you set bids, choose targeting, monitor dashboards, and adjust manually. An agentic system analyzes performance, identifies opportunities, drafts optimizations, and waits for your sign-off before executing. Same job, different interaction model, with humans still in control of the decisions that matter.

This shift in engineering discipline is subtle from the outside, but once you dig deeper it’s hard to deny, and the struggles it causes derail teams and projects. It isn’t widely discussed because everyone is experiencing it for the first time and few are open about what’s actually breaking. But maybe it should be. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027. Not from lack of talent. Not from bad models. From a mismatch between how teams are trained to build software and what agentic systems actually require.

The Shift Few Are Talking About

Traditional SaaS—the kind most engineering teams have spent their careers building—is fundamentally about state management and correctness. You define data structures. You write logic that transforms them. You validate that the output matches what you expected.

Agentic platforms don’t work that way.

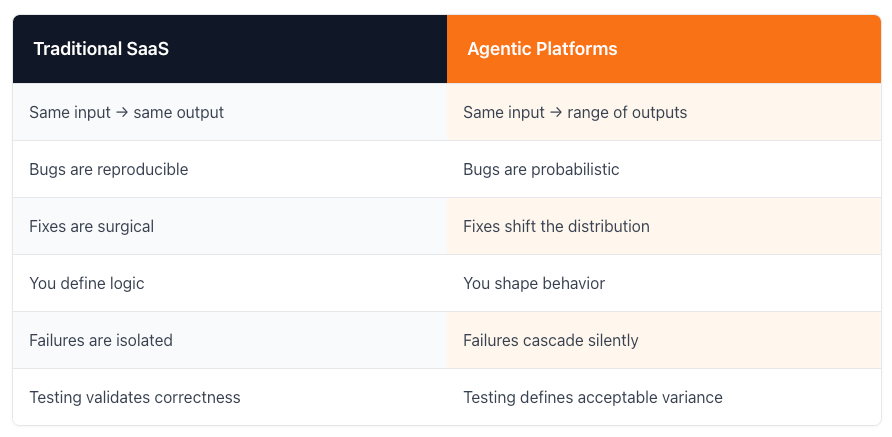

The table above isn’t just a comparison. It’s a list of assumptions your team has internalized over years—assumptions that quietly break the moment you start building agents.

What Actually Changes

1. You stop writing logic. You start shaping incentives.

In traditional software: "If X, do Y."

In agentic systems: "Given this context, bias the model toward Y, unless Z appears, while preserving flexibility elsewhere."

Prompts behave like soft constraints. Memory behaves like latent state. Tools behave like affordances, not guarantees.

“You’re not coding outcomes anymore. You’re nudging behavior.”
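To make the contrast concrete, here is a minimal sketch that puts a hard-coded rule next to the same intent expressed as guidance for a model. Everything in it is an illustrative assumption: `SoftPolicy`, the 1% click-through threshold, and the 7-day exception are hypothetical and not taken from any real platform. The point is only that the first function decides the outcome, while the second merely assembles text that biases a model.

```python
from dataclasses import dataclass, field


def hard_rule(ctr: float) -> str:
    """Traditional logic: the condition fully determines the outcome."""
    return "pause_campaign" if ctr < 0.01 else "keep_running"


@dataclass
class SoftPolicy:
    """Hypothetical container for guidance given to a model; nothing here is enforced."""
    preference: str
    exceptions: list = field(default_factory=list)
    latitude: str = "Use your judgment for anything not covered above."

    def to_prompt(self, context: str) -> str:
        # Build one instruction per line: context, preference, exceptions, latitude.
        lines = [f"Context: {context}", f"Prefer: {self.preference}"]
        lines += [f"Unless: {rule}" for rule in self.exceptions]
        lines.append(self.latitude)
        return "\n".join(lines)


policy = SoftPolicy(
    preference="recommend pausing campaigns with a click-through rate below 1%",
    exceptions=["the campaign launched within the last 7 days"],
)
prompt = policy.to_prompt("Campaign A: CTR 0.8%, launched 3 days ago")
print(prompt)
```

The hard rule would pause Campaign A. The prompt leaves room for the model to weigh the exception, which is exactly why its output has to be evaluated and cannot simply be assumed.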

2. Determinism disappears — and that changes everything.

Run the same workflow twice. Get different results. Both might be acceptable. Or one might be subtly wrong in a way that only surfaces three steps later.

This isn’t a bug. It’s the nature of the system.

Which means:

Debugging becomes forensics, not diagnosis

“It works on my machine” becomes meaningless

Testing shifts from pass/fail to within acceptable bounds
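One way to picture "within acceptable bounds" is a test that runs the same task many times and checks the fraction of acceptable outputs against a tolerance. The sketch below is an assumption-laden illustration: `sample_agent` is a hypothetical stand-in for a real model call, the rubric in `is_acceptable` is invented, and the 0.6 tolerance is arbitrary.

```python
import random
from typing import Callable

OUTPUTS = ["raise bid 4%", "raise bid 5%", "raise bid 6%", "pause campaign"]
_rng = random.Random(0)  # seeded only so the demo is repeatable


def sample_agent(task: str) -> str:
    """Hypothetical stand-in for a model call whose output varies run to run."""
    return _rng.choice(OUTPUTS)


def is_acceptable(output: str) -> bool:
    """Assumed rubric: any bid increase is acceptable; pausing is not."""
    return output.startswith("raise bid")


def pass_rate(agent: Callable[[str], str], task: str, runs: int) -> float:
    """Run the same task repeatedly and return the fraction of acceptable outputs."""
    passed = sum(is_acceptable(agent(task)) for _ in range(runs))
    return passed / runs


MIN_PASS_RATE = 0.6  # assumed tolerance; in practice this comes from your risk appetite
rate = pass_rate(sample_agent, "optimize campaign A", runs=200)
print(f"pass rate {rate:.2f}, within bounds: {rate >= MIN_PASS_RATE}")
```

The assertion is no longer "the output equals X" but "the agent is acceptable often enough", which means the rubric and the tolerance become design decisions in their own right.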

3. Failure modes are compounded, not isolated.

Traditional systems fail loudly. An API returns a 500. A test goes red. A user sees an error screen.

Traditional SaaS failures:

One endpoint breaks

One feature regresses

One object is corrupted

Agent failures:

Memory contamination

Cascading reasoning errors

Tool misuse across agents

Silent drift over time

“Agentic systems don’t break — they drift.”

They degrade. They produce outputs that are technically valid but subtly wrong. By the time you notice, the damage has compounded across multiple steps.

A single “small” change can:

Degrade planning quality

Skew prioritization

Break downstream agents that never changed

This is why agent platforms feel “fragile” even when nothing is obviously broken.
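Drift of this kind can be sketched as a rolling comparison against a baseline. The example below assumes you already have some per-run quality score between 0 and 1 (how that score is produced is outside the sketch); `DriftMonitor`, the baseline, the tolerance, and the window size are all hypothetical choices.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags when the recent average score falls below baseline by more than `tolerance`."""

    def __init__(self, baseline: float, tolerance: float, window: int) -> None:
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores = deque(maxlen=window)  # keeps only the most recent `window` scores

    def record(self, score: float) -> bool:
        """Add a score; return True once a full window has drifted past tolerance."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data yet to judge
        return self.baseline - mean(self.scores) > self.tolerance


monitor = DriftMonitor(baseline=0.90, tolerance=0.05, window=5)
scores = [0.90, 0.89, 0.88, 0.86, 0.85, 0.83, 0.82, 0.80]
alerts = [monitor.record(s) for s in scores]
first_alert = alerts.index(True) if True in alerts else None
print(f"first alert at run index: {first_alert}")
```

In this made-up series no single run drops by more than 0.02 from the one before it, so a per-run check would stay quiet. Only the windowed average shows that quality has moved.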

Multi-Agent Systems Amplify This

Single-agent systems already break traditional intuition. Multi-agent systems multiply the problem.

You’re debugging coordination between probabilistic actors, each with its own context, memory, and reasoning patterns.

State management across agent boundaries. Conflict resolution between competing objectives. Orchestration logic that didn’t exist before. These become core engineering challenges.

“You're not designing functions — you're designing roles.”

You're not writing retries — you're establishing judgment thresholds. This is why system designers outperform feature builders, and why understanding the org matters as much as the code.
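A judgment threshold can be sketched as a routing decision made on each proposed action, in place of a retry loop. The names below (`Proposal`, `decide`, the 0.9 and 0.5 cutoffs, and the idea of a `reversible` flag) are assumptions for illustration; where the confidence score comes from is left open.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    EXECUTE = "execute"
    ASK_HUMAN = "ask_human"
    REJECT = "reject"


@dataclass(frozen=True)
class Proposal:
    description: str
    confidence: float  # assumed score in [0, 1], e.g. from a separate evaluator
    reversible: bool   # whether the action can be undone cheaply


def decide(p: Proposal, auto_threshold: float = 0.9, floor: float = 0.5) -> Action:
    """Route a proposal: drop weak ones, auto-run only safe ones, escalate the rest."""
    if p.confidence < floor:
        return Action.REJECT
    if p.reversible and p.confidence >= auto_threshold:
        return Action.EXECUTE
    return Action.ASK_HUMAN


proposals = [
    Proposal("raise bid 5% on campaign A", 0.95, reversible=True),
    Proposal("reallocate 40% of budget", 0.95, reversible=False),
    Proposal("pause all campaigns", 0.30, reversible=True),
]
decisions = [decide(p) for p in proposals]
for p, d in zip(proposals, decisions):
    print(f"{p.description}: {d.value}")
```

The thresholds encode a business judgment about which decisions matter enough to need a human, which is why they are hard to set without understanding the organization.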

The Discipline Shift

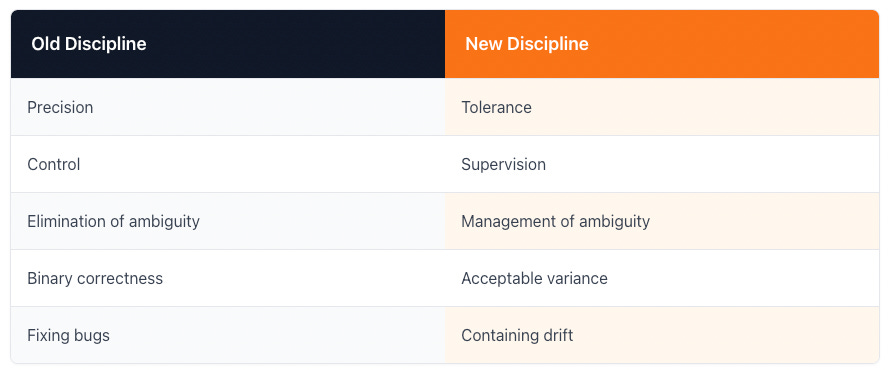

This isn’t about learning a new framework. It’s about retraining intuition.

Teams trained on the first list struggle with the second, not because they lack skill, but because their instincts point in the wrong direction. And unlike traditional SaaS, you can't compensate with better tooling alone. Agentic platforms demand a deeper understanding of the business, its users, and its edge cases, because that's what it takes to tune agents and their context layers effectively.

The New Skill Set

What actually matters for teams building agentic platforms:

Evaluation design: Defining what “good” looks like when outputs vary

Context engineering: Managing what the model sees and when

Observability: Tracing behavior across steps, not just logging outputs

Risk containment: Guardrails, escalation paths, human-in-the-loop checkpoints

Outcome metrics: Measuring task completion, not just technical correctness

Domain depth: Understanding the business, users, and processes well enough to define success when outputs vary, and to recognize when "technically correct" is actually wrong.
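As one illustration of the observability item, the sketch below records what each step of a workflow saw and produced, so a bad final output can be traced back through the chain. `Trace`, `Span`, and the two lambda "steps" are hypothetical stand-ins, not the API of any real tracing library.

```python
import time
import uuid
from dataclasses import dataclass, field


@dataclass
class Span:
    step: str
    inputs: dict
    output: str
    duration_ms: float


@dataclass
class Trace:
    """Collects one Span per step so behavior can be inspected across the whole run."""
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    spans: list = field(default_factory=list)

    def run(self, step, fn, **inputs):
        # Time the step and store its inputs alongside its output.
        start = time.perf_counter()
        output = fn(**inputs)
        elapsed_ms = (time.perf_counter() - start) * 1000
        self.spans.append(Span(step, inputs, output, elapsed_ms))
        return output


trace = Trace()
plan = trace.run("plan", lambda goal: f"plan for {goal}", goal="lower CPA")
draft = trace.run("draft", lambda plan: f"draft based on: {plan}", plan=plan)

for span in trace.spans:
    print(f"[{trace.trace_id[:8]}] {span.step}: {span.inputs} -> {span.output}")
```

Because each span keeps its inputs, you can see that the "draft" step consumed the "plan" step's output, which is the kind of cross-step lineage that plain output logging loses.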

The Bottom Line

The shift from traditional SaaS platforms to cognitive agentic platforms isn’t incremental. It requires different mental models, different testing strategies, and different requirements and definitions of success.

Most teams feel something is off before they can articulate what. They ship agents that work in demos but drift in production. They debug for hours only to discover that fixing one agent has quietly shifted behavior across the entire system. They build confidence in isolated tests that doesn’t survive real usage.

“The teams that survive this shift won't be the ones with the best models. They'll be the ones who recognized the discipline change early — and built the domain knowledge to navigate it.”